World Conquest

July, 2004

because ... well ... why the hell not ...?

it's a dirty job, but somebody's got to do it.

- Saturday, July 31st

- 21:04PM

- Hold the Universe in the Palm of Your Hand:

100 megaparsec cube of the universe realized in crystal form

The always amazing and creative Bathsheba Grossman (from whom I've gotten several bronze sculptures) has come out with some new creations in crystal, not least of which is this 2-1/4" by 3" three-dimensional map of the known structures in a 100 megaparsec cube of space.

Besides being generally cool, this is an intriguing way to appreciate the "foamy" nature of the universe at large scales.

- Sunday, July 25th

- 1:02AM

- Daily Camera:

Origami Boulder with bamboo display stand

Today's award for "most brilliant marketing idea since the Pet Rock" goes to the Origami Boulder Company, which offers you, the would-be-enlightened consumer of Japanese-themed decor, the opportunity of a lunchtime to purchase a genuine, hand-wadded, original origami boulder. (Oooh! Ahhh!)

Sure, you could try to make your own origami boulder and, after sufficient trial and error, maybe you could make one nearly as detailed and boulder-like as the ones from the Origami Boulder Company, but why?

Well, yeah, "to save ten bucks" could be one reason, but for the convenience-oriented would-be-origami-boulder-owner, isn't it nice to know you can now get one delivered to your door with just a click of your mouse?

- Saturday, July 24th

- 2:51AM

- Headshots:

Got a message the other day from someone letting me know that he'd shot me in the head. I'm guessing this happens to me more often than it does to most other people.

All in a day's work, however, when you're a terrorist in a video game simulated hostage scenario.

One advantage on that project was that, even though I had to fall down plenty of times (without padding and generally on metal floors with other hard objects around) depending on how I was being killed, most of the scenarios took only one take.

Some may say that variety may be the spice of death--at least in a video game--but I can tell you that it helps distribute your bruises more evenly.

Which is a good thing. Take my word for it.

- Friday, July 16th

- 14:02PM

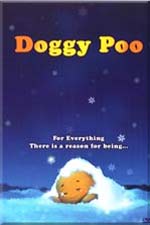

- For Everything, There is a Reason for Being...:

One of the distributors we've been talking to this week is International Licensing and Copyrights, Ltd of the UK. They seem like a pretty cool company with a wide variety of appealing products. I'm hoping we'll be able to do business.

One of their titles, Doggy Poo, particularly caught my eye (though it's not a movie I would ever have thought to make):

A Doggy poo feels all alone and worthless as he finds out he is not needed for anything. He gets rejected and jeered constantly, which forces him to despair. As spring comes, Doggy poo meets a sprout of dandelion. The dandelion tells him that she needs him in order to become a beautiful flower and Doggy poo finally understands there is a purpose for his life as well. He surrenders his body to the dandelion and dreams a beautiful dream of becoming a flower.

God has not created you for no reason He must have a good plan for you.

Special features: The making of Doggy Poo & Picture Gallery

When you think about it, there's no end to what you can read into that metaphor. I'm sure there's a message in there for all of us, if not several messages.

- Two things worry me, however:

- 1) "The making of Doggy Poo"

- (I'm just not sure I'm ready to see that particular behind-the-scenes featurette)

- 2) How long can it be before one of the big studios decides to do a live-action version of Doggy Poo?

- (but if they cast Tom Hanks in the title role, I'm sure it'll be a hit)

- Saturday, July 10th

- 17:34PM

- Soft Cell:

Got a letter the other day from Bill DeLisi, President and CEO of Everspring Productions, writing about a past blog entry in which I'd mentioned the Amazing Hands-Free Cellphone Panda.

We noticed you have our Cell Bear on your website, which we do appreciate. We also noticed that you have a dead link to one of our distributors who can no longer carry our products. Could you please change the link from Evertek to EspringUSA ( http://www.espringusa.com/espringusa_web_site_1_005.htm ) We are the manufacturer, and now the exclusive carrier. Apparently your introduction to our bear is gaining quite a following, and Evertek tells us they are getting calls from angry viewers of your site because they cannot purchase them.

Sincerely, Bill DeLisi

President and CEO, Everspring Productions

I like Evertek, so I hate to be causing them a deluge of angry calls from frustrated would-be purchasers of this fine cell phone accessory. (In case you're unfamiliar with the hands-free panda, I'll mention that among its many fine features, the hands-free panda's mouth will move along with the voice of the person you're talking to, which seems especially appropriate for when you get calls from any of your friends who happen to look like pandas, so you can see why it would be in demand.)

"Grrrr! Arrrgh! Fear my terrifying fruit scent!"

If you're looking for a mobile cellphone accessory that's even more fearsome than a panda, Maxspeed Performance, Inc., will be happy to sell you this terrifying Cell Phone Activated Dinosaur. The Cell Phone Activated Dinosaur lights up, roars, and emits a powerful fruity smell when you get a call on your cell phone. According to Maxspeed, it also lights up, roars, and emits a powerful fruity smell when you call out on your cellphone, which to my mind is a somewhat less useful feature. Usually when you're dialing out, you already know about it, and do you really want to greet everyone you call with the sound of a roaring purple dinosaur in the background?

Nonetheless, I think this could be the beginning of a whole new trend. Like a lot of people, I'm used to being alerted to automotive events by smell--the smell of burning oil, the distinctive aroma of charred electrical insulation, the light scent of an overheating belt, or the tangy odor of engine coolant spilled on a hot block. Why not have other odor-intensive indicators? Seems to me that this would be particularly well-suited to cellphone applications, especially if you could rig different odors to work with caller ID.

*snif* *snif* - smells like Steve's on the line; guess I'd better get that.

- Friday, July 9th

- 23:51PM

- Take This Test:

Wish I'd come up with the idea of doing this as a website. I used to do something similar live, but I didn't have nearly as extensive a collection.

- Tuesday, July 6th

- 19:22PM

- Market So:

I know it's still several months away, but in case you're hitting the 25th annual American Film Market in Santa Monica, November 3rd-10th, 2004, I thought I'd let you know that we'll be back in office number 525 once again. Whether you've come up the elevators or just jogged up the main flight of stairs from the lobby, we'll be right there in the corner.

Can't give you an update yet on what new films we'll be introducing at the upcoming market, but stay tuned....

- 18:21PM

- Safe Circus:

Looking for an inexpensive, yet vaguely unsettling circus experience? Look no further than the Circus of Disemboweled Plush Toys - is it Live or is it Dismemberex?

- 17:55PM

- Lord of the Files:

Granted, this is old news by now (I'd been meaning to comment on it last week, but somehow things got--or stayed--busy), but I was reading a press release from Lineox announcing that they've added Global File System (GFS) support. It's been a while since I've looked into this sort of thing. For starters, it looks like Red Hat went and gobbled up Sistina while I wasn't paying attention.

I've implemented cluster file systems for test purposes before, but whenever I've looked into the implementations previously available, there were always enough "gotchas" that I didn't think they were practical for putting into service out here.

Even so, there's an inherent coolness to a fault-tolerant "floating" storage pool that's not tied to a single server and supports equal and simultaneous access from whatever machines are authorized to connect to the data.

Since the aforementioned Sistina gobblement, Red Hat will now sell you their package for a mere $2,200 (Red Hat Enterprise Subscription required). I've generally had the impression that Red Hat wants to be the Microsoft of Linux, and at their current enterprise pricing structure, I'd have to spend some more time with the back of an envelope to figure out whether Red Hat is still cheaper than going with some flavor of Microsoft's Advanced Server or Datacenter product line.

Which brings us back to Lineox, which offers what is essentially a clone of Red Hat for the more cheapskate-friendly price of ten euros. That leaves only the "gotcha" that I bet it's still like using Red Hat, but these days I imagine everybody but me would consider that to be a feature.

Back when I'd done my playing with cluster filesystems, I'd implemented them on a plain-old wide (single-ended at that point) SCSI bus, which is fundamentally limiting both because of the number of available IDs once you start parcelling them out between storage devices and host adapters, and because of the limited physical size that's practical with single-ended SCSI.
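To put a number on the ID squeeze, here's a minimal sketch of the arithmetic. It assumes a 16-bit wide SCSI bus with 16 addressable IDs (0-15) and that every host adapter sharing the bus claims one of them; the function name and the host counts in the loop are made up for illustration and don't describe any particular setup.

```python
# Assumption: a 16-bit wide SCSI bus, which has 16 addressable IDs (0-15).
WIDE_SCSI_IDS = 16


def drives_available(host_adapters: int, total_ids: int = WIDE_SCSI_IDS) -> int:
    """Return how many IDs are left for storage devices once each
    host adapter on the shared bus has claimed one ID for itself."""
    if not 0 <= host_adapters <= total_ids:
        raise ValueError("host adapter count must be between 0 and total_ids")
    return total_ids - host_adapters


if __name__ == "__main__":
    # Illustrative host counts only.
    for hosts in (1, 2, 4, 8):
        print(f"{hosts} host adapter(s): {drives_available(hosts)} IDs left for drives")
```

Every machine added to the cluster takes an ID away from the storage pool, so the bus fills up from both ends.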

Nowadays, however, Fibre Channel--at least the 1Gbps flavor--is cheaper than dirt. I've got a few hundred fibre drives, up to 73GB, and plenty of switches and host adapters. The only thing I don't have is the spare time to play with fun stuff like this. It's sad but true: I don't really need to implement a shiny new cluster file system for my house, and even a few racks of 73GB fibre drives on a SAN, while cool, are not nearly as practical for the video work that I do as a single, simple fileserver running an array of 250GB IDE drives ($129 apiece this week at CompUSA, no rebates and no limits).
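For the back-of-an-envelope inclined, the drive-count side of that comparison can be sketched roughly as follows. The 73GB and 250GB sizes and the $129 price come from the paragraph above; the 2,000GB target capacity is purely a hypothetical figure picked for illustration, and the sketch ignores RAID overhead, spares, and formatted-capacity losses.

```python
import math

IDE_DRIVE_GB = 250      # from the text
IDE_DRIVE_PRICE = 129   # dollars per drive, from the text
FC_DRIVE_GB = 73        # from the text
TARGET_GB = 2000        # hypothetical target capacity, not from the text


def drives_needed(target_gb: int, drive_gb: int) -> int:
    """Smallest number of drives whose raw capacity reaches target_gb."""
    return math.ceil(target_gb / drive_gb)


ide_count = drives_needed(TARGET_GB, IDE_DRIVE_GB)
fc_count = drives_needed(TARGET_GB, FC_DRIVE_GB)
ide_cost = ide_count * IDE_DRIVE_PRICE
ide_price_per_gb = IDE_DRIVE_PRICE / IDE_DRIVE_GB

if __name__ == "__main__":
    print(f"250GB IDE drives needed: {ide_count} (${ide_cost} total)")
    print(f"73GB fibre drives needed: {fc_count}")
    print(f"IDE price per GB: ${ide_price_per_gb:.3f}")
```

The gap in drive count is the practical point: more spindles means more enclosures, power, and cabling for the same raw capacity.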

It's still tempting, but I know it's a project that would take quite a bit of time and not really get me any significant practical benefits over the fileserver I'm setting up now.
